The real issue was never AI or “automatically generated content” itself. Google penalizes the same thing it always has: content that is thin, unhelpful, and spammy. AI just makes it much easier to create that kind of content at scale. That is important to say clearly, because the two often get mixed together.

In this post, I’ll walk you through seven reasons why AI content isn’t an SEO risk, and why I don’t think it ever will be.

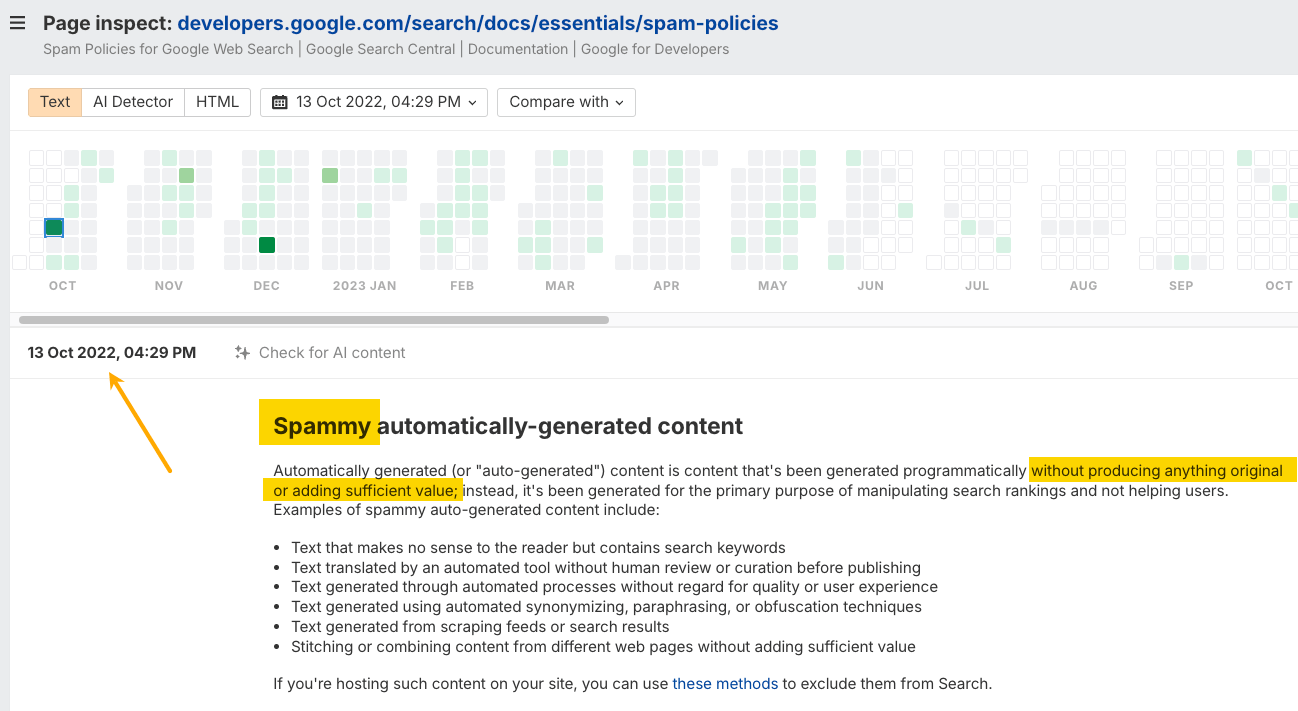

Before AI-generated content was a thing, Google talked about “automatically generated content.” But even then, it was never penalized solely because of how it was created.

For example, Wise has a directory of automatically generated currency conversion pages. The content is programmatic, but it isn’t spam, so it hasn’t been penalized, and it still performs very well in organic search.

The same anti-spam argument applies to AI-generated content. In Google Search’s guidance about AI-generated content, we find this:

> When it comes to automatically generated content, our guidance has been consistent for years. Using automation—including AI—to generate content with the primary purpose of manipulating ranking in search results is a violation of our spam policies. (…) Appropriate use of AI or automation is not against our guidelines. This means that it is not used to generate content primarily to manipulate search rankings, which is against our spam policies.

Google’s guidelines target quality, not production method.

And it makes total sense. AI has helped make serious breakthroughs in science and medicine. It would be absurd to ban the same technology from helping with the write-up.

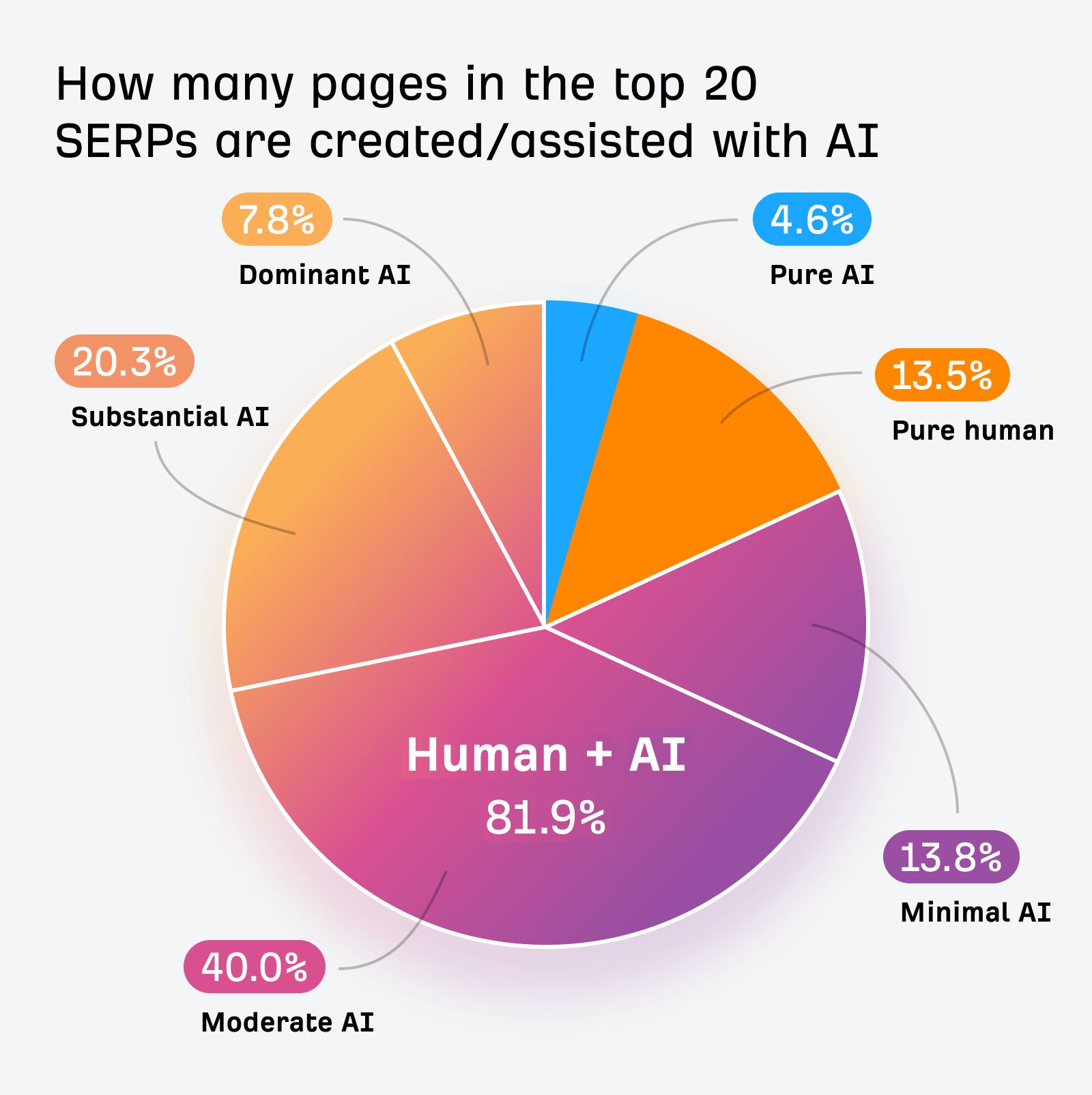

We studied this last year. Using 100,000 random keywords from Keywords Explorer and the AI content detector built into Site Audit, we found that only 13.5% of the top 20 ranking pages were “pure human.” Another 81.9% included some form of AI assistance, and 4.6% were fully AI-generated. Of that 81.9%, most were moderately to heavily AI-assisted.

I use AI in my writing, too, and I wrote all about it in this guide to AI content. As an experiment, I’ve published articles that were more than 90% AI-generated, and to my surprise, every one of them ranked somewhere on the first page.

If you want to see whether your competitors use AI in their top-ranking content, you can check it in Site Explorer. Just enter the domain, open the Top pages report, and look at the AI Content Level column on the right.

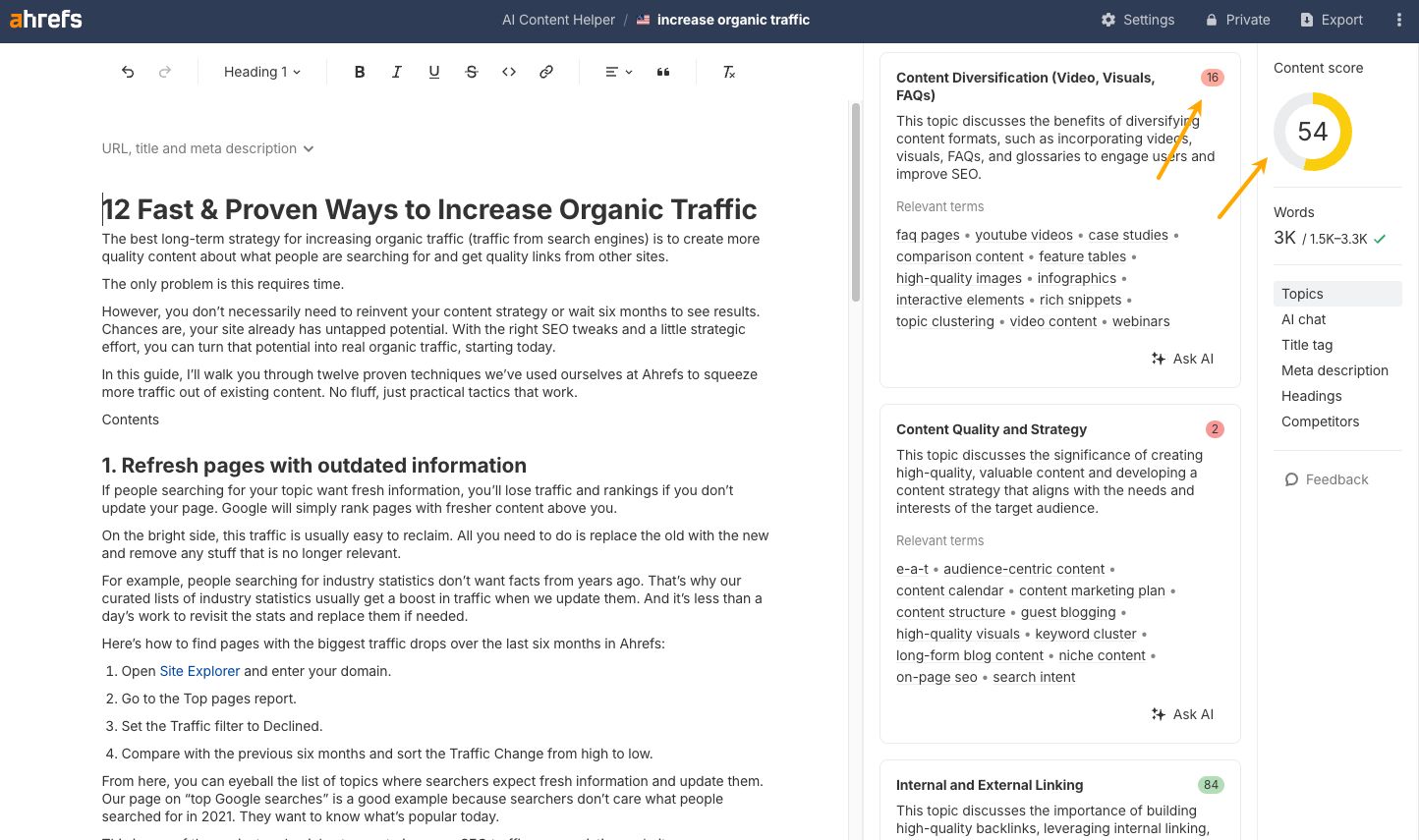

By the way, you don’t need to be a full-on AI enthusiast to take advantage of AI in your content. For example, Ahrefs’ AI Content Helper grades your writing against top-ranking pages, flags topical gaps, and shows you the subtopics you need to cover to get surfaced in both traditional search and AI results. Think of it less as an AI writer and more as an editor that knows what Google and AI chatbots are looking for.

If Google ever penalized AI-generated content, it would be hypocritical beyond measure, considering it’s already one of the biggest producers of AI content on the web today.

Just look at what Google already does:

- AI Overviews pull from everyone’s pages and rewrite the answers in Google’s own words (using Gemini). Last year, AI Overviews already appeared on 20.5% of all SERPs.

- AI Mode generates entire conversational responses.

- They’ve been using AI to rewrite title tags and meta descriptions in search results for years.

- Gemini, their own AI assistant, generates content on demand for millions of users daily.

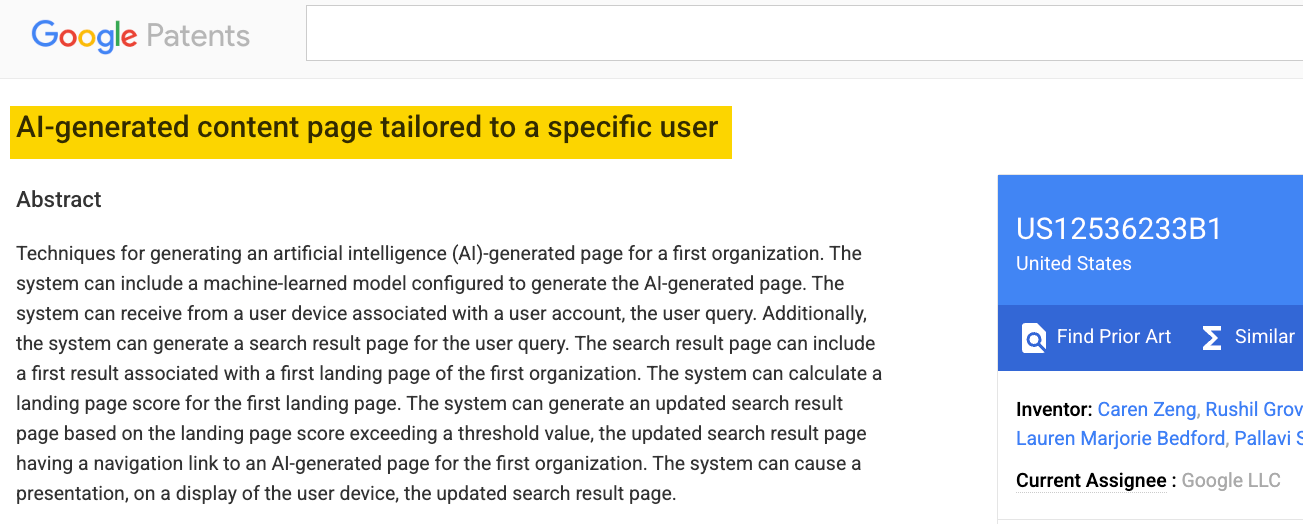

And if you ever thought Google was somehow ideologically opposed to AI content, its AI content patents may change your mind. For example, this new patent suggests Google may replace your own landing pages with AI-generated ones in shopping and ads.

Google Docs, Gmail, Notion, Grammarly. Nearly every writing tool has AI built in now. The line between “AI content” and “AI-assisted content” has collapsed entirely.

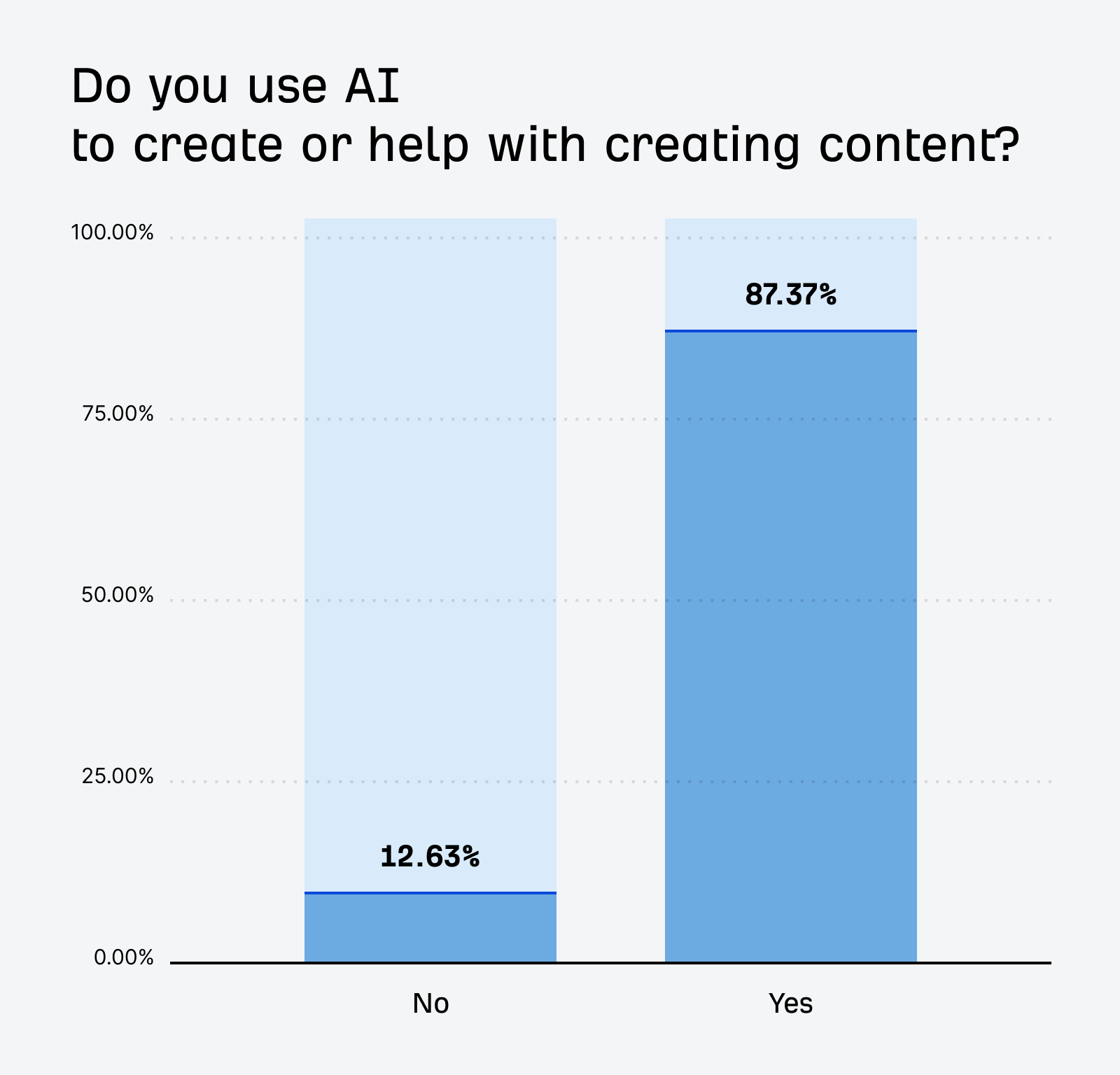

And do you know how many content marketers use AI to make content? Last time we checked, it was 87%.

That was last year, when Claude didn’t even have a web search function. Can you imagine? So I suspect the percentage is closer to 95% this year. And I wouldn’t be surprised if some people don’t even realize AI is somewhere in their content pipeline.

There is already so much AI content out there, and more is coming every day. It’s just how content gets made now. Penalizing it would mean ignoring most of the modern web, maybe even freezing it somewhere around 2025 or 2026.

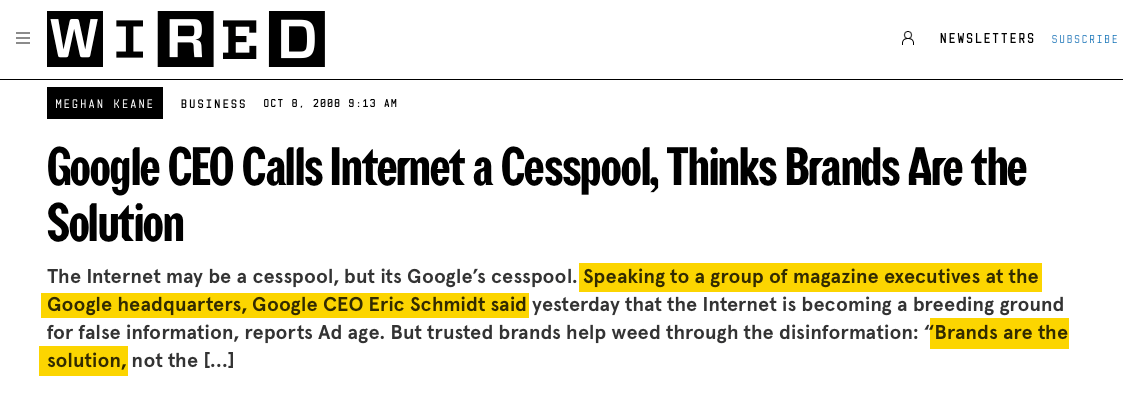

And it’s not just small publishers cutting corners. Remember when Google said that “brands are the solution” to showing quality content on the SERPs?

Well, the problem now is that more and more of those very brands run on AI content.

If your competitors are already using AI to produce more content, faster, and optimized for both traditional search and AI citations, not using AI isn’t taking the high road. It’s falling behind.

It’s Cold War logic with a Red Queen’s race twist. Once one side escalates, everyone else has to match it or lose ground. But even the brands that adopt AI aren’t pulling ahead. They’re running as fast as they can just to “stay in the same place.”

Comparing human and AI content based on who wrote it is the wrong lens entirely. What matters more is whether the content does its job.

Think about a page explaining how to open a door (real page, by the way). A human and an AI would describe it the same way, right? Grab the handle, turn, push or pull. There’s no “human touch” that makes those instructions better. Either it helps the reader open the door, or it doesn’t.

Nobody reads a step-by-step tutorial on setting up Google Analytics and thinks, “but was this written by a person?” They think, “did this solve my problem?”

And when you evaluate content on that basis, human content fails all the time. Plenty of human-written pages are thin, outdated, useless, or simply bad writing.

Content farms employed thousands of real humans to produce millions of pages so bad that Google had to build an entire algorithm update, Panda, just to deal with the mess.

Google itself acknowledged this on the same page about AI-generated content:

> About 10 years ago, there were understandable concerns about a rise in mass-produced yet human-generated content. No one would have thought it reasonable for us to declare a ban on all human-generated content in response.

Meanwhile, with the latest LLMs, AI content is consistently around an 8 out of 10, while human content ranges anywhere from a 2 to a 10. That consistency is why AI writing took off so fast in business.

Even if Google wanted to penalize AI content, detection is far harder than it sounds, for three reasons.

- AI detectors are statistical models, not scanners: they assign a probability score, never a definitive verdict, and false-positive rates are significant.

- AI-generated text can be “humanized” through editing, which scrambles the statistical signals detectors rely on.

- Tools like Grammarly rewrite text in ways that detectors can pick up, so virtually every piece of edited writing now carries some AI fingerprint.
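To see why a probability score is a shaky basis for a penalty, here’s a rough back-of-the-envelope sketch in Python. Every number in it is a hypothetical assumption for illustration (a million pages, half of them AI-generated, and a detector that catches 90% of AI pages but wrongly flags 5% of human ones), not measured data about any real detector.

```python
# Hypothetical illustration: every number below is an assumption, not measured data.

def detector_outcomes(total_pages, ai_share, true_positive_rate, false_positive_rate):
    """Return (correct_flags, false_flags, precision) for a detector that
    flags a page whenever its AI-probability score crosses some threshold."""
    ai_pages = total_pages * ai_share
    human_pages = total_pages - ai_pages
    correct_flags = ai_pages * true_positive_rate      # AI pages correctly caught
    false_flags = human_pages * false_positive_rate    # human pages wrongly flagged
    flagged = correct_flags + false_flags
    # Precision: the share of flagged pages that really are AI-generated
    precision = correct_flags / flagged if flagged else 0.0
    return correct_flags, false_flags, precision


if __name__ == "__main__":
    correct, wrong, precision = detector_outcomes(
        total_pages=1_000_000,
        ai_share=0.5,
        true_positive_rate=0.90,
        false_positive_rate=0.05,
    )
    print(f"AI pages flagged:    {correct:,.0f}")
    print(f"Human pages flagged: {wrong:,.0f}")
    print(f"Share of flags that are actually AI: {precision:.0%}")
```

Even with these made-up but fairly generous numbers, 25,000 human-written pages get flagged. Scale that up to a search engine’s index, and a “small” false-positive rate turns into a lot of wrongly penalized pages.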

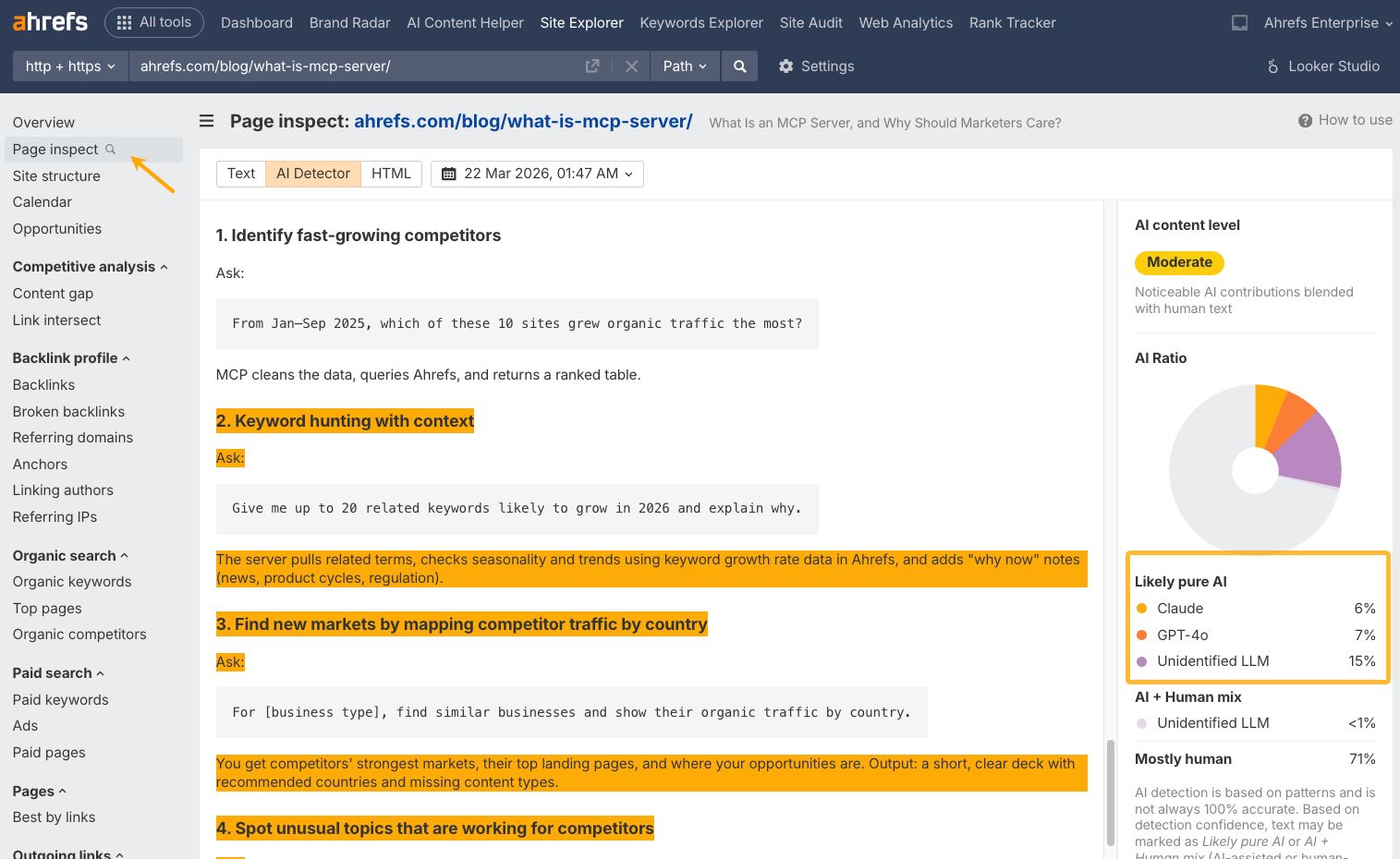

That said, AI detectors aren’t useless. They shine as a competitive research tool rather than a policing tool. For instance, Ahrefs’ AI Detector (in Site Explorer and Site Audit) lets you check how much AI content your competitors publish, which models they use, and how that content performs in search. We tested it against seven other detectors, and it came out on top.

To use it, go to Page Inspect in Site Explorer, open the AI Detector tab, and you’ll see a color-coded breakdown of which parts of the page are likely AI-generated.

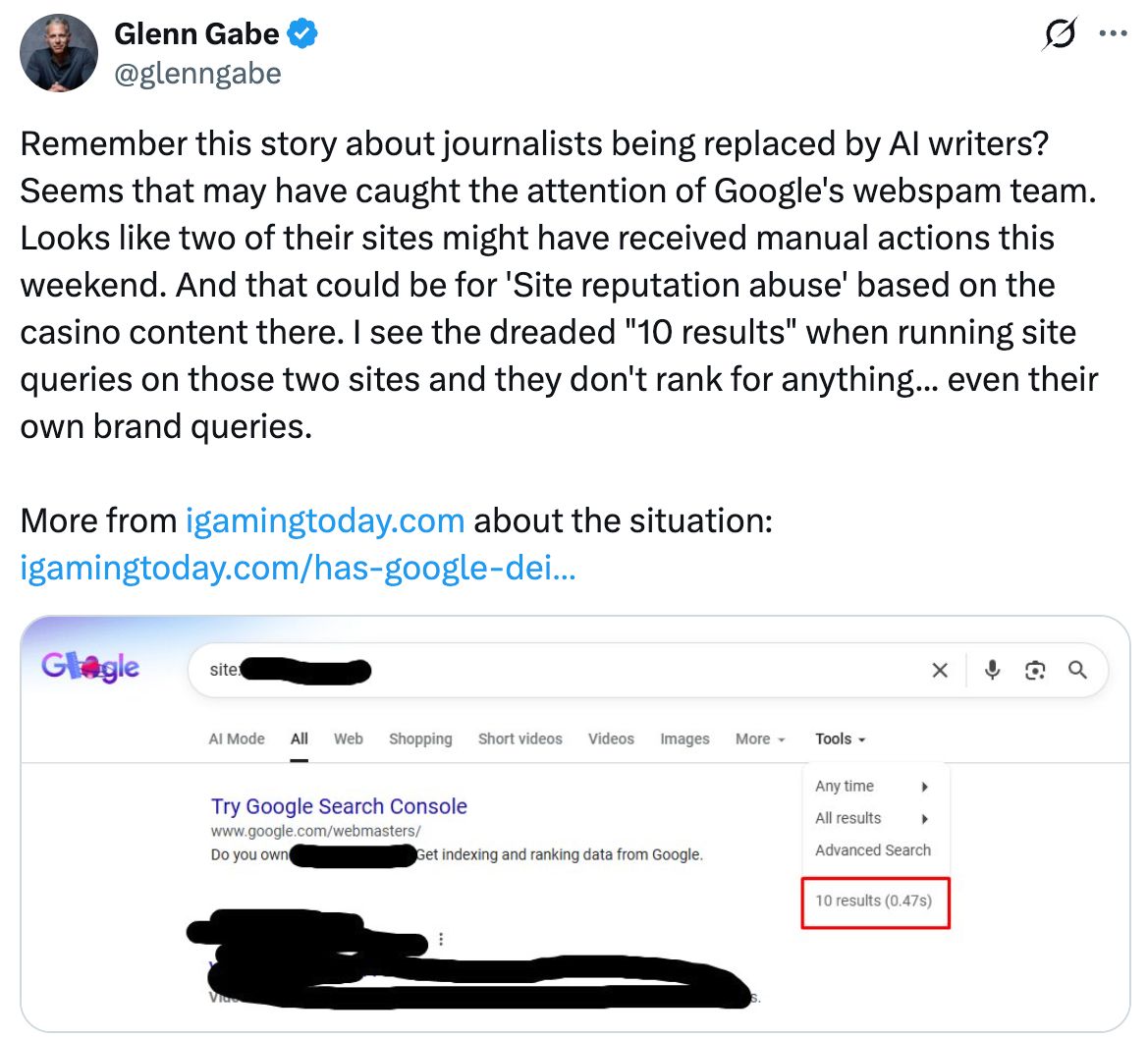

Yes, Google has issued manual penalties under its “scaled content abuse” policy, and some of those cases involved heavy AI use. But read the details, and a pattern emerges: the problem was never just using AI.

Take this recent example from Glenn Gabe: a site hit with a manual penalty for using AI to fake human writers, complete with fake bylines, fake bios, and fake expertise. That’s a deception penalty, not an AI penalty.

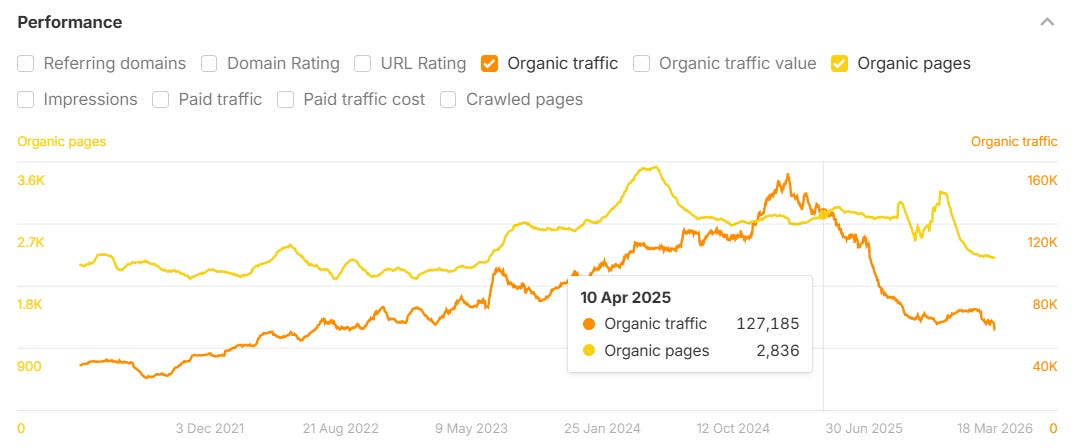

For more examples, check out Lily Ray’s Substack, which I highly recommend. You’ll find an entire gallery of sites that pushed out AI content so fast there’s no way it went through any meaningful human review, landing them squarely in violation of Google’s scaled content abuse policy. Same pattern every time: a quick rise, then a quick fall.

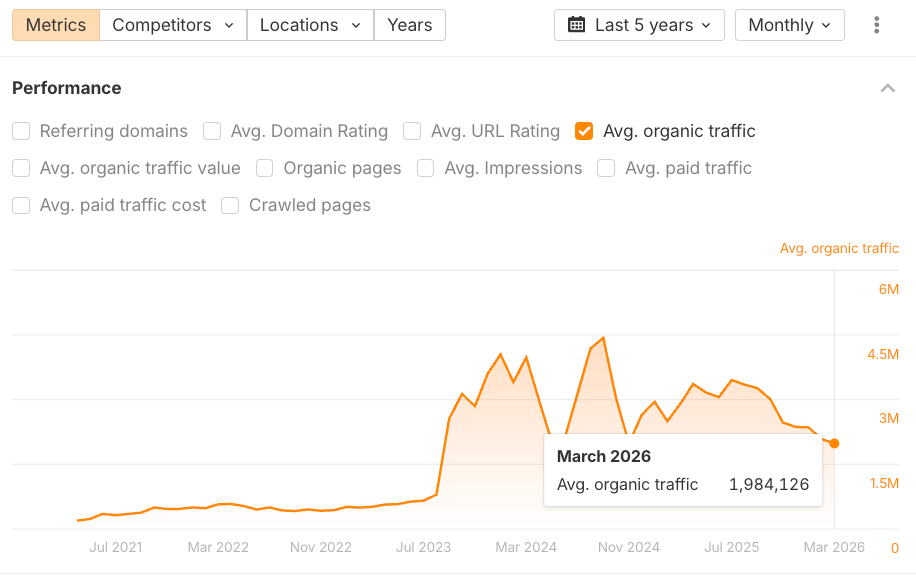

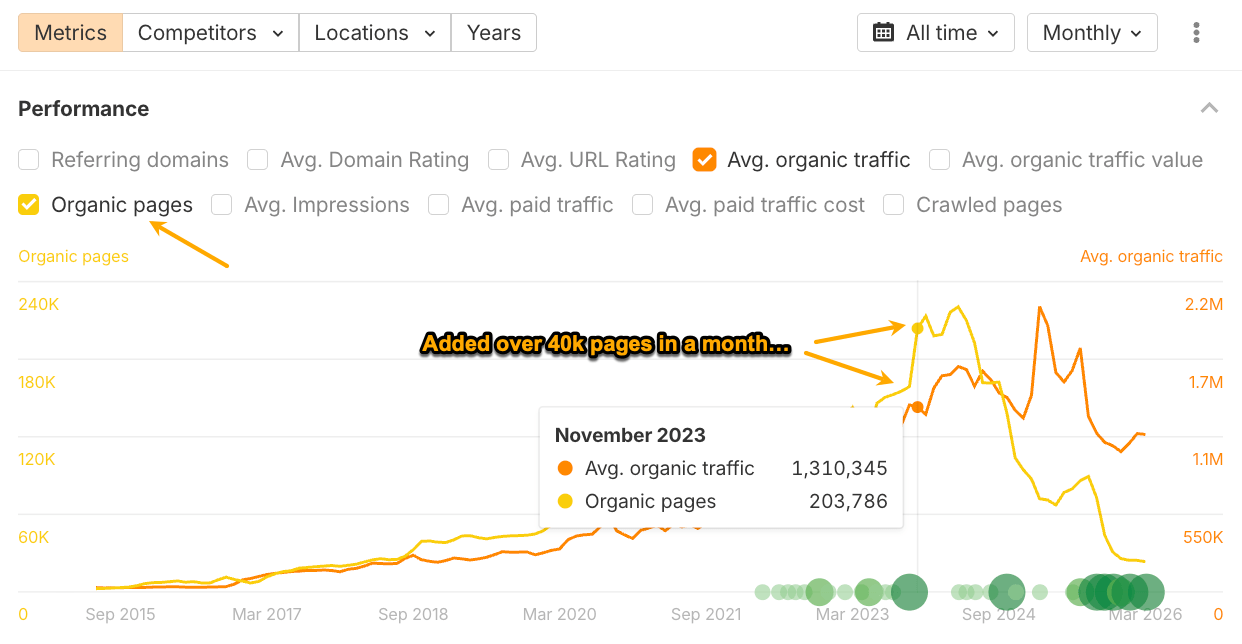

Here are a couple of examples from her latest article:

Lily used Ahrefs’ Site Explorer to get this data. You can do the same if you ever wonder whether a site relies on scaled content. Find the organic pages filter in the Overview report. The rapid growth of the yellow line is a telltale sign.

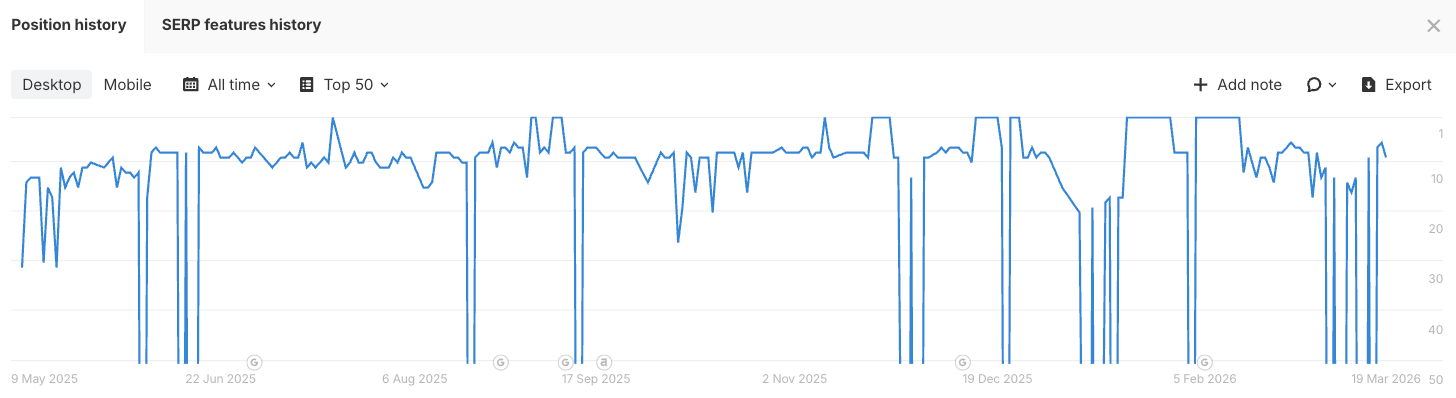

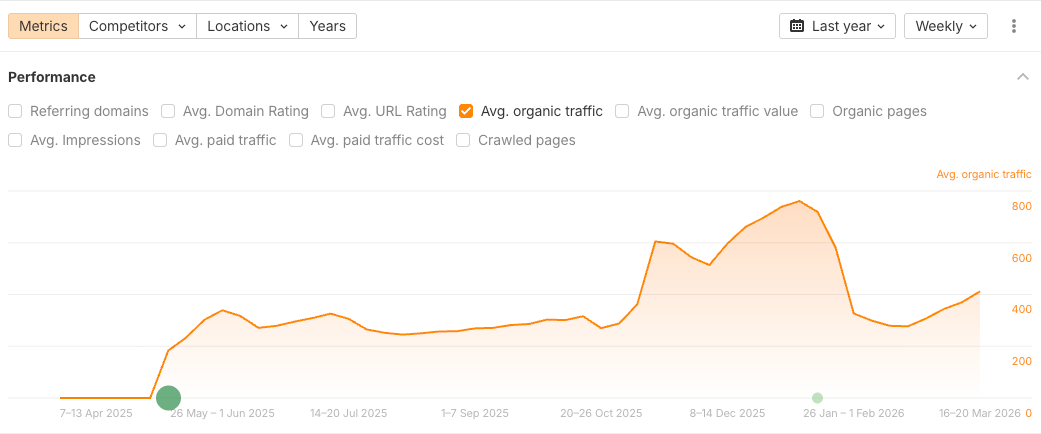

Meanwhile, my mostly AI-generated article performs well relative to its traffic potential, and its traffic graph looks like a typical organic search graph.

So, the popular consensus that “AI content gets you tanked” conflates the tool with the abuse. Google penalizes low-quality, deceptive, and spammy content. AI just makes it easier to produce that content at scale.

Nothing new. A shortcut works until Google catches up, and then it doesn’t.

Final thoughts

It was never about AI vs. human. It’s about helpfulness, topical depth, and whether you’re genuinely answering the query better than what’s already ranking.

And I don’t think Google can afford to see it any other way. They use AI in their own products, and most of the web already runs on it.

So the lesson is simple: don’t use AI to do what has always gotten sites penalized, which is publishing thin, deceptive, or spammy content.

Thanks for reading! If you have any questions or comments, find me on LinkedIn.